A Look at the Possible Future of AI in Elder Law: The Future Doesn’t Have to Be Bleak

“For if the moral law commands that we ought to be better human beings now, it inescapably follows that we must be capable of being better human beings.”

HOOK:

Imagine for a moment that you just got a call from your grandparents. They are excited to tell you that they have taken a new investment opportunity from Elon Musk himself. You dig a bit deeper. You know that your grandpa hasn’t been feeling like himself lately; he was recently diagnosed with dementia, and you can feel his short-term memory slipping week by week. Your grandma excitedly shows you the advertisement that she has pulled up on her iPhone, a video starring Musk himself, with a slight grin, as he explains his new crypto scheme that is guaranteed to make you rich. As the video plays on, you notice some slight irregularities: Musk’s hands don’t look quite right, and the shadows don’t fall quite how they should. “How much money did you put into this?” you ask in growing horror as you realize this video was generated by AI. “We went all in! We gave them a deed to the house and they took out a reverse mortgage on our behalf in order to get us in on the ground floor!”

WILL AI OUTLIVE ITS PROBLEMS TO BECOME USEFUL:

In this Article, I will discuss whether the intellectual property issues and potential liability surrounding generative AI will pave the way for a modern age in elder law, or whether the house of cards will collapse before anything useful is created.

First, this Article will discuss the relevant history and nature of AI in order to establish what it is. Then, it will delve into the current legal framework governing AI and the copyrighted works used to train it. Finally, a more general analysis of AI will reveal some of the use cases where it can make a real difference for the better. AI is a tool, and much like a weapon, it can be used for good or ill. Fearmongering will only lead to ruin and ensure that the US falls behind its hungry rivals.

1. INTRODUCTION TO THE NUANCE OF AI:

In order to discuss what the future of AI will look like for elder law, it is imperative to establish what AI is and how it works. AI is an incredible tool that has the potential to change the way that humans interact with the world. It could enable new scientific discoveries, power driverless cars, or even serve as companionship for lonely individuals. But maybe Dr. Malcolm from Jurassic Park had a point when he stated, “Your scientists were so preoccupied with whether or not they could that they didn’t stop to think if they should.” Along with the great promises of AI, there are underlying moral and legal issues that are paramount to its success or failure going into the future.

A rather prevalent misconception about AI is that it understands what it is doing. Current AI models are somewhat mislabeled as AI and are in fact closer to what computer scientists call machine learning. “Artificial intelligence” implies that the models possess a level of understanding that leads them to an answer, when the reality is closer to guess and check: the model attempts to predict what you want it to say. This distinction may seem pedantic, but it is a fundamental limitation that explains not only why AI hallucinates at a rate that is predictable given three known parameters, but also why it runs into conflict with other areas of law, mainly intellectual property.

Without going into excruciating detail that is well beyond the scope of this Article, AI produces errors, meaning answers that do not fit the pattern of its sample answers, at a rate that is predictable relative to its dataset, compute, and parameters. This poses several problems. The first is the errors themselves. In a recent case, a lawyer failed to review the sources that his AI research generated, and his case was deemed frivolous because the cited cases were entirely made up. Needing to review every minute detail of AI research seriously limits the utility of this time-saving tool, as it can often be harder to spot and fix errors than it is to produce the work from scratch.

Another problem is that compute is not free, in terms of both the hardware itself and the energy required to run it. The demand for power is so taxing on the current electrical grid that Amazon and Google are working with several start-ups to procure small modular nuclear reactors. Meanwhile, Microsoft is planning to reactivate Three Mile Island, a massive nuclear power plant that was the location of the “most serious nuclear meltdown and radiation leak in U.S. history.” While nuclear is an excellent source of clean energy, the willingness to take on its regulatory nightmare shows how desperate tech companies are to power their servers. The hardware is no cheaper. Stability AI, the creator of Stable Diffusion, an AI image generator, went from 32 A100s to over 5,400 A100s in under a year as demand for its product increased. Each A100 GPU from Nvidia costs a whopping $10,000. AI thus comes not only with a massive energy problem to solve, but also with monumental costs for the GPUs required to run it.
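To give a sense of scale, the figures above can simply be multiplied out. The sketch below is a back-of-the-envelope estimate, not Stability AI's actual spending: it assumes a flat $10,000 per A100 and counts only the purchase price of the GPUs, ignoring energy, networking, and any volume discounts. The function and variable names are my own, chosen for illustration.

```python
# Back-of-the-envelope GPU cost estimate.
# Assumption: a flat unit price per A100, as cited in the text.
UNIT_PRICE_USD = 10_000


def fleet_cost(gpu_count: int, unit_price: int = UNIT_PRICE_USD) -> int:
    """Purchase price of a GPU fleet, hardware only."""
    return gpu_count * unit_price


initial_cost = fleet_cost(32)      # fleet at the start of the year
scaled_cost = fleet_cost(5_400)    # fleet less than a year later
growth_factor = 5_400 / 32         # how many times larger the fleet became

print(f"Initial fleet: ${initial_cost:,}")        # Initial fleet: $320,000
print(f"Scaled fleet:  ${scaled_cost:,}")         # Scaled fleet:  $54,000,000
print(f"Growth factor: {growth_factor:.2f}x")     # Growth factor: 168.75x
```

Under those assumptions, the hardware bill alone grows from roughly a third of a million dollars to $54 million in under a year, before a single kilowatt-hour is paid for.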

The final problem is likely the most significant: acquiring data. AI requires massive amounts of data in order to limit the number of errors. This data needs to be real human data, because training new AI models on AI-generated data can degrade them. To procure this data, many companies have resorted to scraping, drawing on a massive dataset known as “the Pile,” which contains material from thousands of YouTube videos as well as other copyrighted content, in addition to taking video from Netflix. Nvidia employees were concerned about the legality of this scraping, as the company was using not only legitimate sites such as Netflix and YouTube, but also pirated content and anything else it could get its hands on. Despite those concerns, Ming-Yu Liu, a vice president at Nvidia, considers scraping to be an open legal question and said, “This is an executive decision, we have an umbrella approval for all of the data.”

Despite the sentiment at many tech companies, harvesting scraped content may not be as open a legal question as these companies suggest. Google claims that

“Our privacy policy has long been transparent that Google uses publicly available information from the open web to train language models for services like Google Translate. This latest update simply clarifies that newer services like Bard are also included. We incorporate privacy principles and safeguards into the development of our AI technologies, in line with our AI Principles.”

Yet Google does not seem to believe this claim itself, as it also signed a deal to pay Reddit $60 million per year in order to harvest Reddit’s data. Paying for what is essentially a license to use that data suggests that the rest of the data Google is harvesting is not in fact “fair use.” Google’s best response to this accusation may be that Reddit specifically forbade AI training on its data, which is vast, user generated, and particularly useful for training Large Language Models (LLMs) such as ChatGPT, while other sites may not explicitly prohibit AI training.

2. AI LEGAL PRECEDENT: WHY IT’S COMPLICATED

While there are still many unanswered questions about the legality of AI, there is some clear precedent. AI itself cannot hold a copyright, but it is unclear how much human intervention amounts to the “modicum of creativity” that copyright requires.

First, in Naruto v. Slater, PETA sued on behalf of a monkey who took a selfie, arguing that the monkey, rather than the photographer, held the copyright. The court ruled that “the Copyright Act does not ‘plainly’ extend the concept of authorship or statutory standing to animals. To the contrary, there is no mention of animals anywhere in the Act.” The court also stated, “Nonetheless, we conclude that this monkey—and all animals, since they are not human—lacks statutory standing under the Copyright Act.” This is strong precedent that art generated by AI will not be protected by copyright, as computers are similarly not people. Zarya of the Dawn, a comic book illustrated with AI-generated images, was initially granted copyright registration, but once the US Copyright Office learned that the images were created with Midjourney, it withdrew protection for them. The Office nonetheless stated, "We conclude that Ms. Kashtanova is the author of the Work’s text as well as the selection, coordination, and arrangement of the Work’s written and visual elements," which establishes that the human-authored parts of such a work are protected. What remains unclear is exactly how much human intervention is required to make AI-assisted work copyrightable.

One famous example could lend legal support to AI companies scraping all of this data. In the Perfect 10 litigation, Google indexed photos that an illicit website had posted and made them available as thumbnails, bypassing the paywall that Perfect 10 imposed on access to its library. The court ultimately decided that although displaying the thumbnails would otherwise infringe Perfect 10’s copyright, the search engine used the images for a different purpose than the originals, so Google was allowed to continue its practice of indexing the site.

In another instance, Google was sued by the Authors Guild for scanning every book it could get its hands on into a database that allows people to search through the books and view small sections of them. Again, however, the courts ruled that the purpose was substantially different from that of the original works and that the uses were therefore “non-infringing fair uses.”

3. AI IS A TOOL:

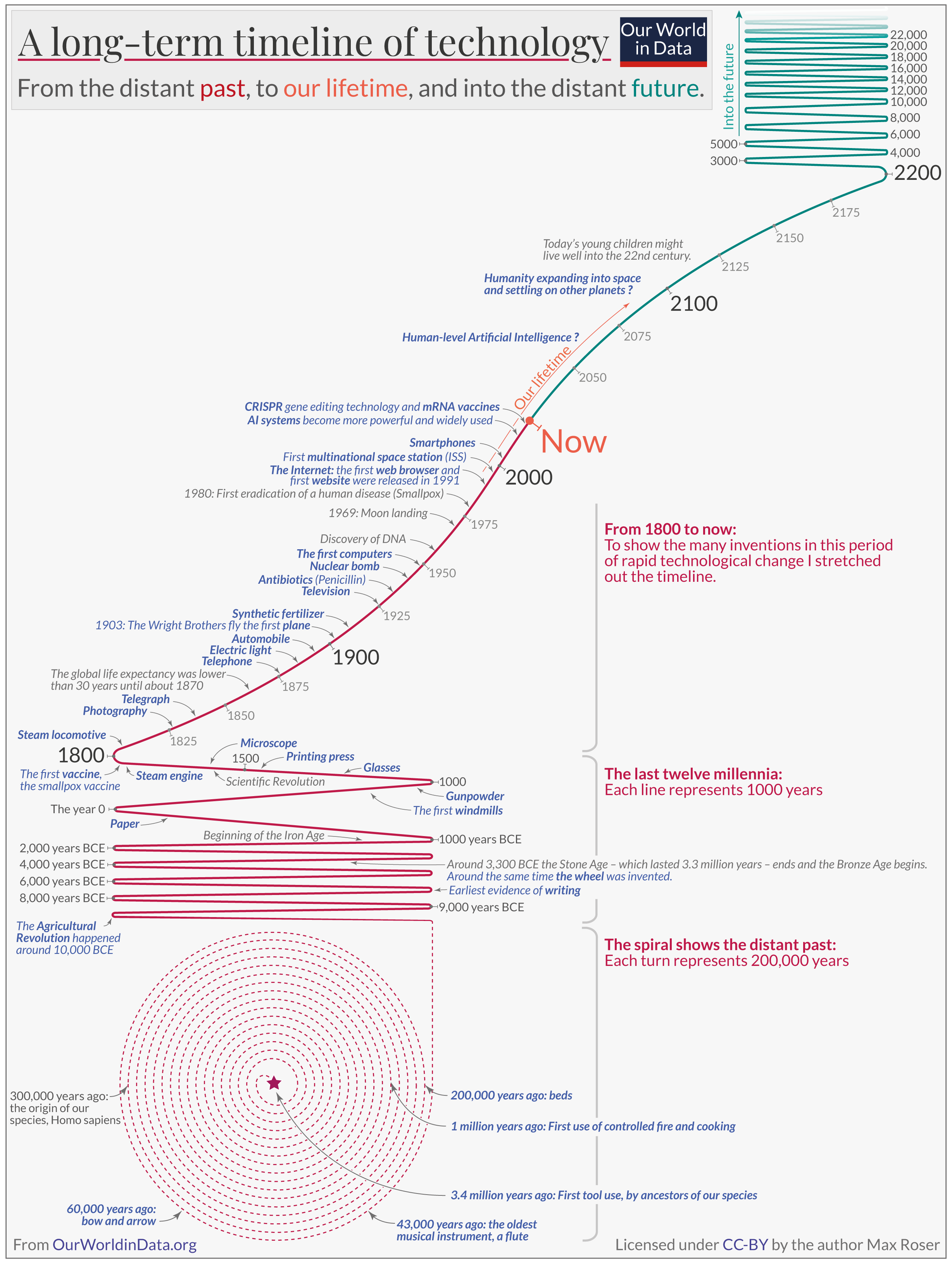

Like a gun, AI is a tool that can be used for many purposes. New tools also tend to be underestimated at first. In 1903, the Wright brothers’ first flight lasted less than a minute, yet 66 years later, humanity landed on the moon. Despite the great scientific breakthrough that had taken place, “neighbors paid less attention to the history-making feat than if the "boys" had simply been on vacation and caught a big fish or shot a bear.” This story highlights how slow people are to get on board with new technology, but progress is not only inevitable, it is speeding up.

AI has the potential for substantial positive change in the realm of elder law. Cost has always been a substantial factor in anything legal, and reducing cost is a task to which AI is particularly suited. Many elderly individuals and households are under financial stress due to a fixed and limited income under Social Security. Catherine Powell, a Cleveland resident, was thrust back into the workforce at 62 in order to keep up with inflation. As Vivian Nava-Schellinger says, “Social Security comes up short by at least $1,000 [a month] in many locations. That’s a lot of money for an older adult.” According to US Census Bureau data from 2020, over 40% of Americans aged 56 to 64 lack retirement accounts. The situation is even more concerning for women and for Black and Hispanic Americans: 56.5% of working-age women, 63.2% of Black working-age Americans, and 71.7% of Hispanic working-age Americans do not have retirement accounts.

Greater judicial efficiency can help to alleviate this burden. It’s no secret that the legal process can be costly and often takes far too long. As someone who was licensed as a California insurance provider for Aflac, I am aware of the numerous issues that people face when dealing with insurance, and of how the legal process can be necessary to resolve a dispute. The stakes can be high. For example, in García-Navarro v. Hogar La Bella Unión, Inc., the patient died unnecessarily because incomplete medical records prevented a lifesaving blood transfusion.

While I certainly expect a mountain of pushback against using AI to supplement a judge’s ability to hear cases, it not only seems like a reasonable proposition, it seems far more reasonable than turning litigants away. The judicial system is at a breaking point: there are too many cases, and they are all too expensive. The expansion of individual rights after the 1950s, and the Supreme Court’s broad interpretation of the Constitution to uphold the New Deal, have made the average person rely on the judicial system more and more.

Human judges also bring their own views to the bench. The Cornell Legal Information Institute defines judicial activism as follows: “Judicial activism refers to the practice of judges making rulings based on their policy views rather than their honest interpretation of the current law. Judicial activism is usually contrasted with the concept of judicial restraint, which is characterized by a focus on stare decisis and a reluctance to reinterpret the law.” Furthermore, Professor Matthew Stephenson, a member of the Harvard Law faculty who is in charge of The Bulletin, an influential alumni magazine, argues that “It would be a mistake, if you were an economist trying to understand how economic policy worked, to assume that judges are merely neutral enforcers of law.” He continues: “Judges often have a great deal of discretion when they interpret legal documents, and therefore understanding their decision-making processes is important to understanding economic outcomes.”

The current problems of the judicial system are the high costs associated with lawsuits, long lead times for cases to be resolved, a shortage of judges and staff to resolve them, and a lack of consistency inherent to human decision-making. The problems likely to arise from using AI are an impersonal approach, inherent bias introduced by whoever programs it, and a black box that obscures legal precedent.

Therefore, I propose a hybrid approach in which AI ingests the information for a new case and gives a “preliminary result,” using a process similar to the one the American Law Institute (ALI) uses when making its Restatements of the Law. The Restatements are secondary sources that seek to clarify the law by presenting the principles or rules for each specific area. The ALI synthesizes twenty areas of law by drawing on existing case law and statutes from various jurisdictions. In theory, this group of experts could supplement the Administrative Office of the U.S. Courts (AO), whose current job is to prevent fraud, waste, or abuse. As the program is assessed, more areas of law could be added to the twenty that are already in Restatement form.

This “preliminary result” would then be reviewed by a judge with expertise in the subject matter, who would determine whether the result was adequate and then give a “final result.” The system could work alongside existing procedure by having a jury find the facts and then submitting those facts to the model. With some of the extra time that judges would gain from this system, the AO should also introduce regular subject matter tests for judges. When I was working as a lifeguard manager, part of my job was to reassess the knowledge of lifeguards. Getting certified as a lifeguard involved an extensive certification requirement through the Red Cross, but there was no guarantee that people would retain what they learned over the 2 years that the certification lasted, or be aware of new developments in best treatments. Law is quite similar: the bar exam is a rigorous test that certifies a person to practice, but it is taken once. The appeal system does not entirely alleviate these concerns, because an appeal is often not worth pursuing for numerous reasons, including cost, additional attorney fees, time, and stress. It seems prudent to account for known errors. Just as AI is prone to hallucinate, people are prone to make mistakes, and the mistakes of a judicial system should not stand in the way of equitable justice.
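For readers who think in flowcharts, the proposed process can be sketched in a few lines of code. This is only an illustration of the order of the steps (jury facts, preliminary result, judicial review, final result), not a design for a real system. Every name in it, including `decide_case`, `PreliminaryResult`, and the toy model and judges, is hypothetical, and the rule that a result citing no authority is rejected is my own assumption, added to reflect the “black box” concern raised earlier.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class PreliminaryResult:
    """What the (hypothetical) model proposes before any judge sees it."""
    outcome: str
    authorities: List[str]  # cases and statutes the model relied on


@dataclass
class FinalResult:
    outcome: str
    authorities: List[str]
    judge_modified: bool  # True when the judge replaced the model's outcome


def decide_case(
    jury_facts: List[str],
    model: Callable[[List[str]], PreliminaryResult],
    judge_review: Callable[[PreliminaryResult], Optional[str]],
) -> FinalResult:
    """Run one case through the hybrid process: model first, judge last."""
    preliminary = model(jury_facts)
    # Assumption: a result that cites no authority cannot be checked
    # against precedent, so it is rejected before reaching the judge.
    if not preliminary.authorities:
        raise ValueError("preliminary result cites no authority")
    # The judge returns None to accept, or a replacement outcome.
    replacement = judge_review(preliminary)
    if replacement is None:
        return FinalResult(preliminary.outcome, preliminary.authorities, False)
    return FinalResult(replacement, preliminary.authorities, True)


# Toy stand-ins, for illustration only.
def toy_model(facts: List[str]) -> PreliminaryResult:
    return PreliminaryResult("claim granted", ["placeholder authority"])


def accepting_judge(result: PreliminaryResult) -> Optional[str]:
    return None


def overriding_judge(result: PreliminaryResult) -> Optional[str]:
    return "claim denied"


if __name__ == "__main__":
    facts = ["medical records were incomplete"]
    print(decide_case(facts, toy_model, accepting_judge))
    print(decide_case(facts, toy_model, overriding_judge))
```

The point of the sketch is that the human decision always comes last: the model can only propose, and nothing becomes a “final result” without passing through the judge.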

If implemented well, this system would have the potential to solve all of the problems previously mentioned. The increased efficiency would promote lower costs and reduce the strain on the judicial system, potentially without the addition of expensive lawyers. There would be consistent results that have a clear precedent rooted in cases and statutes that can be analyzed to ensure the results remain not only fair, but also predictable. The concerns of an impersonal approach are mitigated by having a judge ensure that results are legal and ethical, and the inherent bias of judges and the model can be rooted out effectively by the legal scholars that create these systems.

Another use of AI is in the medical industry. The law has usually played a reactive role in responding to new areas of technology, but the accelerating pace of innovation makes this a good time to introduce new testing methodology. AlphaFold, one of the first truly useful AI models, was able to predict structures for millions of proteins. Before this tool, the main way to determine a protein’s structure was a method known as X-ray crystallography, which cost tens of thousands of dollars per protein; often a single protein was the topic of a PhD thesis. To put this into perspective, over the six decades that scientists around the world had been working on protein structures, they had solved only about 150,000. With the introduction of AlphaFold, within a few months the AI model was able to predict about 200,000,000. Yet as revolutionary as this technology is, the rapid acceleration of progress it represents is not new.

Those solutions, which cover nearly all proteins known to exist in nature, have directly led to advances against the enzymes behind antibiotic resistance, which could allow old drugs to be effective again. Those solutions have led to the development of a malaria vaccine. Those solutions have led to a greater understanding of the protein mutations that lead to cancer or schizophrenia. However, these rapid advancements have revealed a serious problem with the current legal system: it is slow, painfully slow.

Of all things, sunscreen is a great example of how our legal framework hampers progress in unpredictable ways. In the US, sunscreen is regulated as a drug. Yet while the US has one of the most advanced pharmaceutical industries in the world, its sunscreen is far behind because of the perverse incentives built into the regulation of this drug. Other countries treat sunscreen as a cosmetic, which has led to many new formulations, while the FDA has not approved a new sunscreen ingredient since 1999, over two decades ago.

Misaligned incentives caused this sad state of sunscreen innovation. While strong intellectual property rights have allowed US pharmaceutical companies to thrive, the story is different for sunscreen. After massive amounts of investor funding and years of testing to pass the stringent FDA requirements, a new sunscreen is granted only 18 months of exclusivity.

But what if the approval process and testing could exist digitally? The short answer is that they can’t, but just a few years ago solving protein structures digitally also seemed impossible. The problem seems to be rather philosophical in nature: regulation is designed to impose certain scientifically determined minimum standards, even if they are not economically sound. The difficulty with testing sunscreen digitally is deciding who would build the system. One option is for the government to foot the expensive bill for a robust AI system that could run faster-than-real-time tests of the long-term effects of different chemical formulations, with no guarantee that it would ever work. Good luck selling that to the Department of Government Efficiency (DOGE). The other option, potentially even more concerning, is for private companies to create the system that tests their new formulations. In a world where the best pollsters pride themselves on knowing the outcome of a poll from its wording before it is ever released to the public, would it be prudent to trust that the new testing model wouldn’t favor the people who paid for it? Likely not.

It seems that the more relevant discussion would be how to change the intellectual property incentives for sunscreen to match those of the rest of the pharmaceutical world, or whether to stop classifying sunscreen as a drug. While AI is incredibly handy in the use case it was designed for, its usefulness is greatly exaggerated when applied to more general problems. The solution to the sunscreen problem is less exciting; it is nuanced and only apparent when looking at the entire problem. There are no easy answers, only careful consideration, yet this way of thinking has fallen out of favor in modern politics in light of the effectiveness of “alternative facts” and sensationalist media.

There is some hope. The Nobel Prize in Economic Sciences 2024 was given to Daron Acemoglu, Simon Johnson, and James A. Robinson for their work on societal institutions and their causal relationship to a country’s economic prosperity. While some colonies were set up for the long-term benefit of European migrants, others had exploitative institutions that provided short-term gains for the few in power. This research is relevant here because it suggests that limiting corruption at every level leads to better results. Addressing the real problem in sunscreen requires not passing the problem on to the next person in office. It requires doing unpopular things that may not look good for the next election cycle.

Finally, there are some potential uses of AI that I do not think would be productive. In an age of social isolation, many people are resorting to AI chatbots in order to feel some sort of connection to another. This is an abomination, but an inevitable one that likely has no remedy. Ozempic has been a smashing success in the weight loss world because it requires nothing from its beneficiaries. AI solves the problem of relationships in the same way that Ozempic solves weight loss: it’s easy. Losing weight has always required people to change the way that they live, but it is hard to eat right and exercise, and success rates for these recommendations are tellingly low.

Forming a relationship with an AI, or steering elderly people into one in place of interaction with friends or relatives, is not a suitable replacement for genuine human connection. Similarly, people attempting to “talk to their future selves,” or trying any other such gimmick, are being tricked and do not understand how AI works. People deserve genuine human connection, and there is already legal action alleging that an AI chatbot caused someone to commit suicide. Yet this should not be regulated against, as excess regulation (especially blind regulation) unnecessarily burdens a country’s industry.

The United States military has determined that AI is the future and has contracted with defense contractors such as Anduril to integrate AI into weapons such as drones. While it may seem that regulating chatbots would not affect these government interests, innovation comes from unlikely places. Scientists recently used findings from studying the unusually warm testicles of elephants to make significant progress in cancer research, a connection that the average person is unlikely to guess. Developing AI has become an arms race, with the United States imposing restrictions on high-end graphics cards in order to slow the development of AI in China, yet DeepSeek, a Chinese AI program, already competes with and beats American models in many relevant tests. Any restriction on AI development risks having American companies fall behind Chinese counterparts that face fewer regulations.

CONCLUSION:

AI has the opportunity to usher in a new era of prosperity and fairness for everyone. Just as easily as AI can be used to scam your grandparents out of their money with fake videos of Elon Musk, it can also help them get more efficient healthcare, a quicker and more equitable judicial system, and maybe even better sunscreen that prevents cancer without leaving them looking washed out.

Whether we like it or not, AI will pave the way for modern elder law, and anyone who does not get on board will be left behind. While there are numerous challenges to the ethics and legality of training these models, the writing is on the wall that this cutting-edge technology is here to stay. Technology is a never-ending arms race to protect people from scammers, and AI is the obvious next step in that war. AI has the potential to radically change the justice system for the better, creating a more just and efficient system that all can benefit from. Insurance is already in the process of integrating AI, which can lead elder law into a cheaper and more efficient future in which less litigation is needed to receive benefits. Various documents involved in elder law, such as wills and advance directives, can be streamlined to serve an ever-expanding clientele more efficiently.

Finally, AI chatbots are not a replacement for genuine human connection, and should not be treated as a way of dealing with an aging population. Technology now advances faster than anyone can hope to keep up with, and older adults are simply the first to be left behind by it. The intellectual property issues of AI will be resolved, as there is too much money at stake, even if the law is currently unclear. AI will get better over time, and people are already finding methods to reduce or eliminate the hallucination problem that modern AI has. AI will continue to change the shape of law and the legal field. We need to either get on board or get out of the way.